In 2026, relying on cloud-based AI APIs is a structural risk to your business. Whether you are using ChatGPT for internal operations or embedding it into your product, the current pricing and hosting model forces you to accept two major compromises: unpredictable variable costs and the exposure of proprietary data to third-party infrastructure.

By moving to local inference with frontier models like Qwen 3.5, you can eliminate recurring API fees and regain total control over your data.

The Real Problems with Cloud AI

- Gross Margin Erosion: Cloud providers charge you per token, so your costs grow in step with usage. As your user base or internal activity grows, the bill grows with it, which makes it hard to reach the high gross margins typical of a successful software company.

- Data Sovereignty Risks: For UK businesses, sending sensitive client data or internal IP to a third-party server creates significant regulatory hurdles and, without the right safeguards, potential breaches of UK GDPR. True privacy requires that data never leaves your physical or virtual custody.

- Variable Latency: Round-tripping data to a cloud server can add hundreds of milliseconds to every response, and the delay varies with network conditions and provider load. For real-time applications or developer workflows, this lag becomes a real bottleneck to productivity.

- Third-Party Dependency: You are building on a “black box.” If a provider changes their pricing, deprecates a model, or experiences an outage, every workflow that depends on it stops.
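To make the margin argument concrete, here is a minimal sketch of the break-even arithmetic between per-token cloud pricing and a fixed monthly cost for local hardware. Every figure in it (the price per million tokens, the monthly hardware and power cost, the token volume) is a hypothetical placeholder, so substitute your own numbers.

```python
def monthly_cloud_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Cloud spend grows in direct proportion to the tokens you process."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(fixed_monthly_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which cloud spend equals a fixed local cost."""
    return fixed_monthly_cost / price_per_million_tokens * 1_000_000


# Hypothetical inputs, in whatever currency you budget in.
PRICE_PER_MILLION = 2.0      # assumed cloud price per million tokens
LOCAL_FIXED_COST = 400.0     # assumed monthly hardware amortisation plus power

threshold = break_even_tokens(LOCAL_FIXED_COST, PRICE_PER_MILLION)
cloud_bill = monthly_cloud_cost(500_000_000, PRICE_PER_MILLION)
print(f"Break-even volume: {threshold:,.0f} tokens per month")
print(f"Cloud bill at 500M tokens per month: {cloud_bill:,.2f}")
```

Below the break-even volume the cloud is cheaper under these assumptions. Above it, every additional token widens the gap in favour of local inference.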

Why the 2026 Models Change the Game

We have reached the “Zero to One” moment where open-weight models are competitive with their proprietary counterparts for many everyday workloads. Loc.ai gives you immediate, free access to leading open-weight models:

- Qwen 3.5 (35B-A3B): A “Mixture of Experts” model with 35B total parameters, of which roughly 3B are active for each token. You get the capacity of a much larger model at close to the per-token compute of a 3B model, although all 35B parameters still have to fit in memory. That keeps generation fast on modest hardware.

- Llama 4 Scout: Features a context window of up to 10 million tokens, so you can process entire codebases or legal archives locally, memory permitting, without the surcharges associated with “long-context” cloud calls.
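The "35B-A3B" naming can be turned into a rough sizing check before you pick hardware. The sketch below estimates the memory needed for the weights alone at a given quantisation level and the share of parameters that is active per token. It deliberately ignores the KV cache, activations, and runtime overhead, all of which add to the total and grow with context length, so treat the result as a lower bound.

```python
def weight_memory_gb(total_params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = total_params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def active_fraction(active_params_billions: float, total_params_billions: float) -> float:
    """Share of parameters used for each token in a Mixture of Experts model."""
    return active_params_billions / total_params_billions


# Figures taken from the model name: 35B total parameters, about 3B active.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: about {weight_memory_gb(35, bits):.1f} GB")

print(f"Active per token: about {active_fraction(3, 35):.0%} of parameters")
```

Compute per token tracks the active parameters, while memory tracks the total, which is why a Mixture of Experts model can feel quick and still need a well-specified machine.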

The 5-Minute Setup Guide

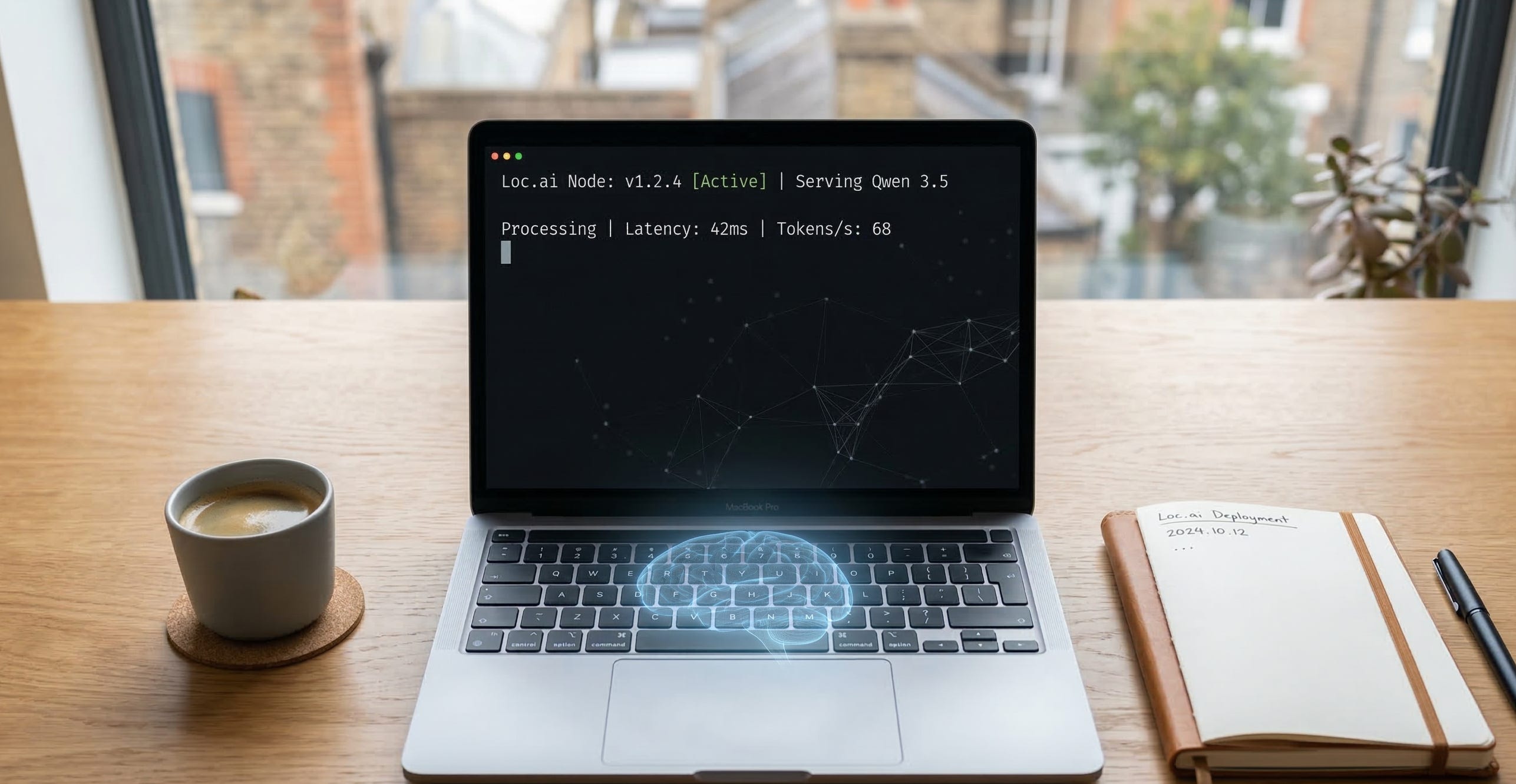

You don’t need an ML Engineering degree to reclaim your infrastructure. Loc.ai acts as your orchestration layer, handling the containers and model routing automatically.

Step 1: Connect Your Hardware. Register your device (a local workstation, a Mac, or a private VM) with the Loc.ai platform. A single terminal command installs the agent and turns the machine into a secure local node.

Step 2: Deploy Your Model. Choose from our library of pre-optimised models like Qwen 3.5 or DeepSeek. Hit “Serve” to create an OpenAI-compatible API endpoint that runs entirely on your own metal.

Step 3: Connect the Interface. Launch Open WebUI, a popular open-source chat interface, and point it to your Loc.ai API Base URL. Your team now has a familiar, high-performance interface with no recurring API fees.
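Because the endpoint from Step 2 is OpenAI-compatible, any client that speaks the standard chat completions format should be able to talk to it, not just Open WebUI. Here is a minimal sketch using only the Python standard library. The base URL, model identifier, and placeholder API key are assumptions for illustration. Use the values your own node reports.

```python
import json
import urllib.request

# Hypothetical values: replace with the base URL and model name shown for your node.
BASE_URL = "http://localhost:8000/v1"
MODEL = "qwen3.5-35b-a3b"


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "not-needed") -> urllib.request.Request:
    """Build a POST request in the OpenAI chat completions format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: the local server accepts any bearer token.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(base_url: str, model: str, prompt: str) -> str:
    """Send the prompt to the local endpoint and return the reply text."""
    request = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Example call, once a node is serving a model:
# print(ask(BASE_URL, MODEL, "Summarise our refund policy in two sentences."))
```

Existing tools built for the OpenAI API can usually be redirected the same way, by changing only their base URL setting.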

[Click here for the copy-paste terminal commands and full configuration documentation]

Scale to the Enterprise

A single node is a proof of concept. For a high-growth organisation, the goal is Scaled Orchestration.

Loc.ai allows you to deploy these nodes across your entire network, routing traffic based on task complexity and security requirements. This is how you build an AI strategy that is fast and secure, and that stays profitable as you scale.
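To illustrate what routing on task complexity and security requirements can look like, here is a small sketch. It is not Loc.ai's actual routing logic. The node names, URLs, complexity scale, and selection rule are all hypothetical, chosen only to show the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    name: str
    base_url: str
    on_premises: bool      # True if the machine sits inside your own network
    max_complexity: int    # 1 = simple tasks only, 3 = most demanding tasks


@dataclass(frozen=True)
class Task:
    prompt: str
    complexity: int        # same 1 to 3 scale as Node.max_complexity
    sensitive: bool        # True if the data must stay on premises


def pick_node(task: Task, nodes: list[Node]) -> Node:
    """Pick the least powerful node that satisfies the task's constraints."""
    candidates = [
        node for node in nodes
        if node.max_complexity >= task.complexity
        and (node.on_premises or not task.sensitive)
    ]
    if not candidates:
        raise LookupError(f"No node can serve this task: {task}")
    # Prefer the smallest capable node so larger machines stay free for hard tasks.
    return min(candidates, key=lambda node: node.max_complexity)


# Hypothetical fleet.
FLEET = [
    Node("office-laptop", "http://10.0.0.11:8000/v1", on_premises=True, max_complexity=1),
    Node("gpu-workstation", "http://10.0.0.12:8000/v1", on_premises=True, max_complexity=3),
    Node("private-vm", "http://vm.example.internal:8000/v1", on_premises=False, max_complexity=3),
]

contract_review = Task("Review this client contract.", complexity=3, sensitive=True)
print(pick_node(contract_review, FLEET).name)
```

In this sketch a sensitive task is never sent to a node outside your own network, and simple tasks land on the smallest machine that can handle them.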