Your data never leaves this device.

SafeChat is a privacy-native AI workspace built for secure businesses. Wholly browser-based — nothing to install. Every prompt, document and conversation stays on the device — by architecture, not by promise.

◆ Compliant by default

The status quo

Every prompt goes to Big Tech.

Every document. Every question. OpenAI. Google. Anthropic. Microsoft. They train on it. They store it. They own it.

Cloud AI today

- × Every prompt is logged on someone else's server

- × Every document becomes training data — or could

- × Every conversation crosses jurisdictions you don't control

- × Every renewal tightens the lock-in

SafeChat

- Prompts execute on the device, in the browser or via Loc.ai Link

- Documents are parsed client-side — PDF.js, mammoth.js, never uploaded

- Sessions stay on the device — keyed to your Loc.ai identity

- Zero telemetry. Zero conversation sync. Architecture, not policy.

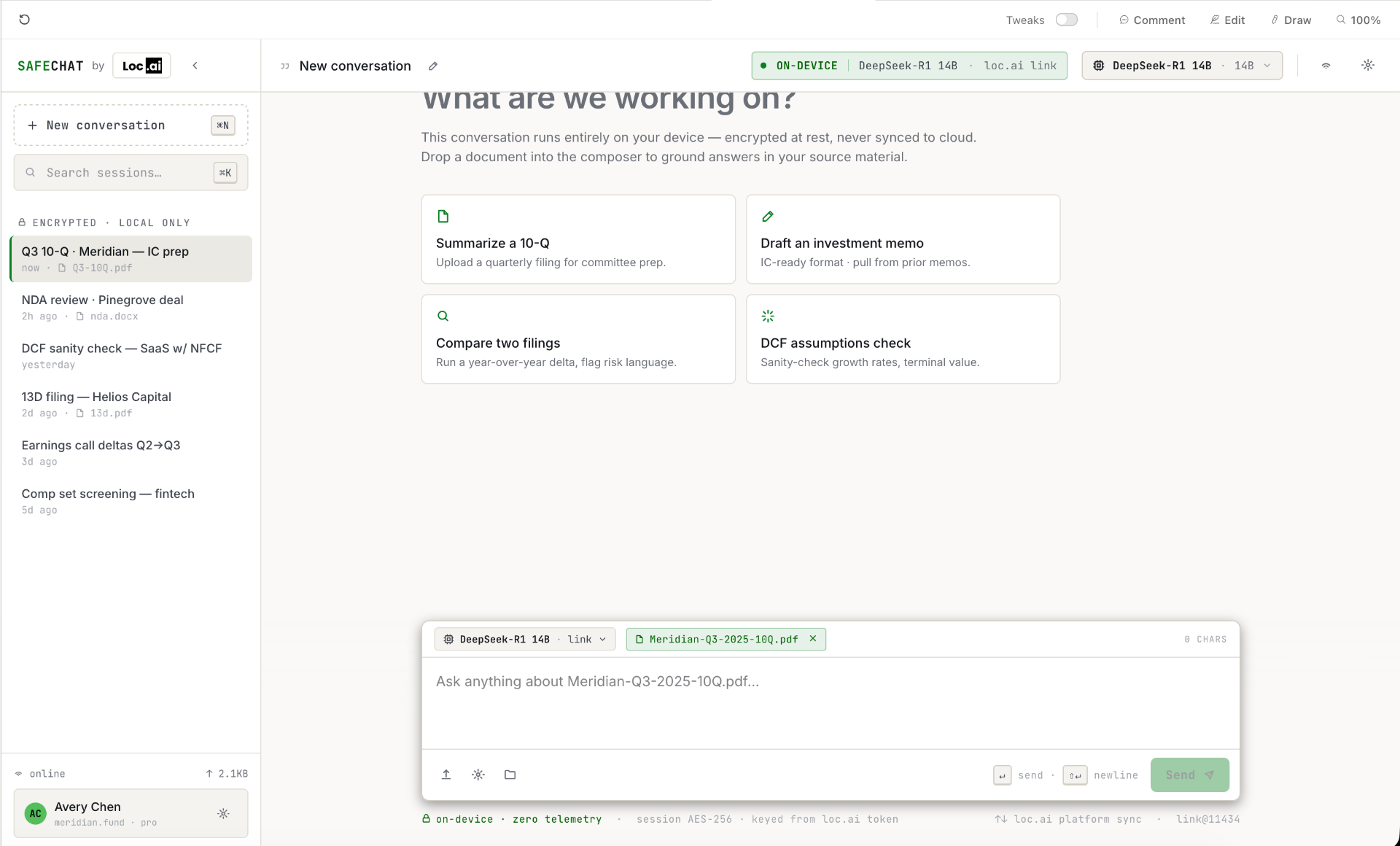

What's in the MVP

A private workspace. Not a consumer chatbot.

SafeChat is built for professionals who can't paste sensitive material into a public model — and shouldn't have to.

Two local inference modes

WebGPU runs Phi-3, Gemma, Mistral and Llama 3.1 8B straight in the browser. With one click, Loc.ai Link unlocks 13B+ models, with no limits, on the same device.

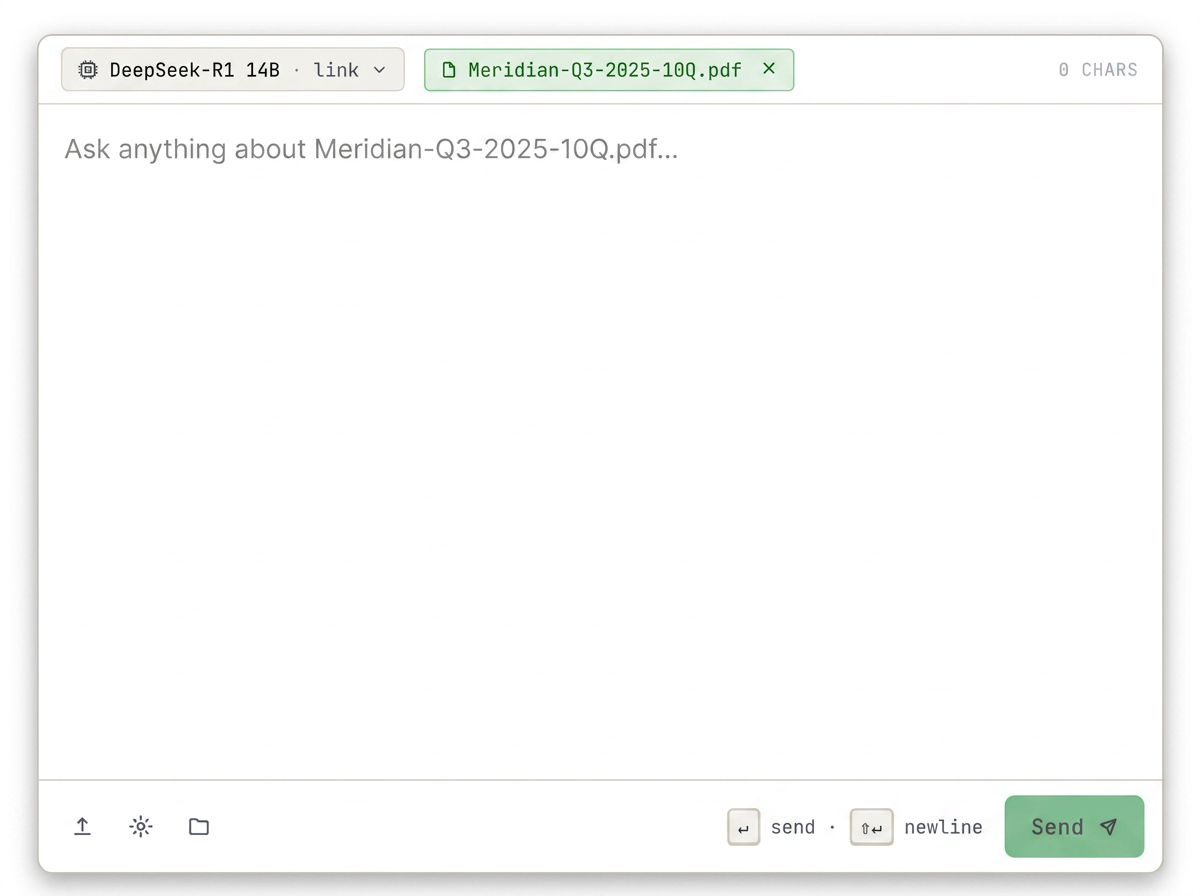

Document Q&A, fully on-device

Drop in a PDF, DOCX, TXT or Markdown file. Text is extracted in-browser and injected into the model's context window. Nothing transits a server.
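As a rough illustration of the "injected into the model's context window" step, the sketch below assembles a prompt from text that has already been extracted in the browser (for example by PDF.js or mammoth.js). The `ExtractedDocument` type, the `buildPrompt` helper, the prompt layout and the character budget are all hypothetical; SafeChat's actual prompt format is not described on this page.

```typescript
// Hypothetical shape for a document whose text was extracted client-side.
interface ExtractedDocument {
  name: string;
  text: string; // plain text, already extracted in the browser
}

// Builds a single prompt string containing the document and the question.
// maxDocChars is a crude stand-in for a token budget; a real implementation
// would count tokens with the model's own tokenizer.
function buildPrompt(
  doc: ExtractedDocument,
  question: string,
  maxDocChars: number
): string {
  const truncated = doc.text.length > maxDocChars;
  const body = truncated ? doc.text.slice(0, maxDocChars) : doc.text;
  const notice = truncated
    ? "\n[document truncated to fit the context window]"
    : "";
  return [
    `Document: ${doc.name}`,
    "---",
    body + notice,
    "---",
    `Question: ${question}`,
  ].join("\n");
}
```

Everything here is string handling on the device: the prompt is handed to the local runtime, and no network call is involved at any point.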

Works offline, end-to-end

Inference, history and documents keep working with no internet connection. Cloud integrations degrade gracefully with clear UI state.

Trust signals built in

On-device badge, model name and zero-telemetry status bar are visible in every state of the app — not a footnote.

Loc.ai-native, SSO ready

Authenticate with your Loc.ai account or SAML/SSO. Model registry, license tier and Loc.ai Link status sync — conversation data never does.
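One way to make "conversation data never syncs" structural rather than a convention is an explicit allowlist: only named fields can ever enter a sync payload. The sketch below assumes hypothetical key names (`modelRegistry`, `licenseTier`, `linkStatus`) for the three items listed above; it is not SafeChat's actual sync code.

```typescript
// Only these fields may be sent to Loc.ai. Key names are hypothetical and
// mirror the three items the page says are synced.
const SYNCABLE_KEYS = ["modelRegistry", "licenseTier", "linkStatus"] as const;
type SyncableKey = (typeof SYNCABLE_KEYS)[number];

// Copies allowlisted fields out of the app state. Anything else, including
// conversations and documents, is never read, so it cannot be sent.
function toSyncPayload(
  state: Record<string, unknown>
): Partial<Record<SyncableKey, unknown>> {
  const payload: Partial<Record<SyncableKey, unknown>> = {};
  for (const key of SYNCABLE_KEYS) {
    if (key in state) {
      payload[key] = state[key];
    }
  }
  return payload;
}
```

With an allowlist, adding a new field to the app state does not change what is synced until someone deliberately adds its key to `SYNCABLE_KEYS`.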

Architecture

Two local compute modes. Zero cloud inference.

SafeChat routes every inference call to one of two local runtimes — both powered by Loc.ai Infrastructure. Loc.ai handles auth and model metadata only. Conversation and document content are never transmitted.
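The routing decision can be pictured as a small pure function. This is a minimal sketch under stated assumptions: the 8B in-browser ceiling is taken from the model list on this page, while the `RouteRequest` shape, the `routeInference` name and the rule that small models stay in the browser even when Loc.ai Link is connected are illustrative guesses, not documented behaviour.

```typescript
type Runtime = "webgpu" | "link";

// Hypothetical request shape for the sketch.
interface RouteRequest {
  modelParamsB: number; // model size in billions of parameters
  linkConnected: boolean; // whether a local Loc.ai Link agent was found
}

// The page lists Llama 3.1 8B as the largest in-browser model.
const BROWSER_MAX_PARAMS_B = 8;

function routeInference(req: RouteRequest): Runtime {
  if (req.modelParamsB <= BROWSER_MAX_PARAMS_B) {
    // Assumption: small models run in the browser regardless of Link status.
    return "webgpu";
  }
  if (req.linkConnected) {
    return "link";
  }
  // There is deliberately no remote fallback: both runtimes are local.
  throw new Error(
    `A ${req.modelParamsB}B model needs Loc.ai Link, which is not connected`
  );
}
```

The point of the sketch is that the function has only two possible results and both are local; failing loudly replaces any silent hand-off to a hosted model.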

Loc.ai Link · localhost

A lightweight desktop agent that turns the user's machine into a local inference server. Auto-discovered by SafeChat — no port to type, no URL to paste.
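Auto-discovery of a localhost agent is commonly done by probing a short list of loopback ports and taking the first one that answers. The sketch below shows that pattern only; the port numbers, the `Probe` type and the `discoverLink` name are invented for illustration, and the mechanism Loc.ai Link actually uses is not described on this page.

```typescript
// Hypothetical port list: the real ports used by Loc.ai Link are not
// documented here.
const CANDIDATE_PORTS = [11500, 11501, 11502];

// A probe resolves to true when an agent answers at the given base URL.
type Probe = (baseUrl: string) => Promise<boolean>;

// Tries each loopback port in order and returns the first base URL that
// responds, or null when no agent is running.
async function discoverLink(
  probe: Probe,
  ports: number[] = CANDIDATE_PORTS
): Promise<string | null> {
  for (const port of ports) {
    const baseUrl = `http://127.0.0.1:${port}`;
    try {
      if (await probe(baseUrl)) {
        return baseUrl;
      }
    } catch {
      // Connection refused or timed out: move on to the next port.
    }
  }
  return null;
}
```

In a browser the probe would typically wrap `fetch` with a short timeout against a health endpoint on the agent. Passing the probe in as a parameter keeps the discovery loop testable without a running agent.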

WebGPU · in-browser

Quantised models of up to 8B parameters (Phi-3 Mini, Gemma 2B, Mistral 7B Q4, Llama 3.1 8B Q4) run via WebLLM. Models are cached in IndexedDB after the first download, with a WASM/CPU fallback for unsupported browsers. Use it when you can't install anything.
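The WebGPU-or-fallback choice can be sketched as a capability check. In browsers that implement WebGPU, `navigator.gpu.requestAdapter()` resolves to `null` when no suitable adapter exists, so the presence of `navigator.gpu` alone is not enough. The `NavigatorLike` and `GpuLike` types and the `detectBackend` name below are hypothetical, introduced so the check can run outside a browser; how SafeChat itself performs detection is not stated on this page.

```typescript
// Minimal structural types so the check does not depend on DOM typings.
interface GpuLike {
  requestAdapter(): Promise<object | null>;
}
interface NavigatorLike {
  gpu?: GpuLike;
}

type Backend = "webgpu" | "wasm";

// Returns "webgpu" only when an adapter is actually available; any missing
// API, null adapter or thrown error falls back to WASM/CPU.
async function detectBackend(nav: NavigatorLike): Promise<Backend> {
  if (!nav.gpu) {
    return "wasm";
  }
  try {
    const adapter = await nav.gpu.requestAdapter();
    return adapter ? "webgpu" : "wasm";
  } catch {
    return "wasm";
  }
}
```

In a page this would be called as `detectBackend(navigator)`, and the result would decide which model runtime to load.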

◆ Now onboarding design partners

Get SafeChat before general release.

We're opening SafeChat to a small cohort of regulated teams — ahead of public launch. Early access includes direct engineering support, hands-on onboarding for Loc.ai Link, and influence over the roadmap.

Who it's for

Every team. Every prompt.

SafeChat is built for the teams that can't ship sensitive work through a public model — and the leaders who finally want to say yes.

Legal review. Financial analysis. Patient context. Vendor contracts. Anything you'd otherwise redact, summarise by hand, or skip entirely.

Your data.

Your jurisdiction.

Your sovereignty.

Inference never crosses a border you didn't set. SafeChat runs where your device runs — UK, EU, US, air-gapped lab, or a plane over the Atlantic. Compliance posture is decided by your hardware, not a vendor's region picker.

SOC 2 · GDPR · HIPAA-ready

Compliant by default — no DPIA for prompt content.

No data residency debate

Data stays on the device. There is no residency question to settle.

Zero telemetry by design

Auth and model metadata only. Conversations never sync.

◆ Compliant by default

Private AI.

For everyone in your organisation.

Sign in with Loc.ai and you're chatting on-device in seconds. No model setup. No port numbers. No prompt ever leaves the device.

v0.1.0 · MVP · SOC 2 · GDPR · HIPAA-ready